The idea of usability dates back to at least the 14th century, when the word ‘usable’ was first used. Nowadays, achieving usability involves a range of research methods, from traditional usability testing to newer approaches such as unmoderated UX assessments.

Back in 2003, the Nielsen Norman Group published an article on “Return on Investment for Usability”, which reported significant improvements in sales conversion rates (100%), user productivity (161%), and the use of specific features (202%) after investing around 10% of each project’s budget in usability. Twenty years on, can we still expect the same results?

The short answer is no, and that is a positive sign of maturity in the field. The article “Average UX Improvements Are Shrinking” explains that we have learned a lot about what works and what doesn’t over the past two decades. This doesn’t mean that UX is less important. There is still ample room for improvement, because users’ expectations have risen significantly as a result of that investment in UX. These expectations will continue to rise, and a service designed today may not be accepted in five years’ time.

To ensure a return on investment in UX, it’s crucial to identify the best areas to invest in. However, allocating and spending a usability budget doesn’t guarantee returns, particularly if it’s done too late in the project. After months of interface and code development, making significant improvements becomes difficult. This article seeks to answer the question: “when is the right time to spend your usability budget?”

Before development

To get the best return on investment, allocate some budget before programmers or graphic designers start their work. This early stage allows cost-effective exploration of design solutions with users, avoiding potential problems and saving resources. Usability analysis at this point helps specify user needs accurately and improves cost estimation for design development. In one study*, a 70% increase in specification costs led to an 18% reduction in overall costs, even after accounting for the extra spent on specification.
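To see what those two figures imply, the short Python sketch below works out how much the rest of the project would have to shrink for a 70% rise in specification costs to produce an 18% fall in the total. The share of the original budget spent on specification is not given in the study as cited here, so the values of `spec_share` are purely hypothetical, and the function name is our own.

```python
def downstream_saving_needed(spec_share, spec_increase=0.70, overall_reduction=0.18):
    """Fractional cut in non-specification cost implied by the cited figures.

    spec_share is a hypothetical assumption: the fraction of the original
    project budget spent on specification.
    """
    baseline_total = 1.0  # normalise the original project cost to 1
    baseline_spec = spec_share * baseline_total
    baseline_rest = baseline_total - baseline_spec

    new_spec = baseline_spec * (1 + spec_increase)         # 70% more on specification
    new_total = baseline_total * (1 - overall_reduction)   # 18% less overall
    new_rest = new_total - new_spec                        # what is left for everything else

    return (baseline_rest - new_rest) / baseline_rest


# Illustrative specification shares only; not taken from the study.
for share in (0.05, 0.10, 0.20):
    saving = downstream_saving_needed(share)
    print(f"spec share {share:.0%}: downstream cost falls by {saving:.1%}")
```

Under these assumptions, a project that originally spent 10% of its budget on specification would need the remaining work to cost roughly 28% less, which gives a sense of how much rework a better specification would have to prevent.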

Recommendation

Describe what the users will do (with the support of the technology), not what the technology does or is. Next, develop the functional and technical specifications. Finally, draw up the project development plans.

During development

To ensure a good return on investment, it’s beneficial to allocate some budget during development. Gathering feedback from users who interact with a prototype of the proposed system significantly improves the design’s chances of success in the real world.

Predicting how people will use a design is challenging since technology usage can be unpredictable and may evolve into new social customs. User requirements change over time, especially before and after a design is created. By testing prototypes during development, designers gain a better understanding of how the design can be used as it evolves. This facilitates easier cost-benefit analysis, enabling business managers to commercialise the design more effectively.

Recommendation

Review the user requirements specification constantly. Incorporate additional user requirements as they are identified during the development process, and manage the costs and benefits of designing for them.

After development

Spending all of the budget after development typically yields only a moderate return on investment. Testing after development will show where the design is imperfect and can be improved. Even if there is little time or budget left, some improvements can still be made, and these will improve the performance of the system. However, the cost of making a design change after development can be up to 100 times higher than making the same change before development, so fewer design solutions are economically viable at this stage. Despite the benefits, often only some of the identified improvements will be implemented.
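The effect of that multiplier on what is worth fixing can be illustrated with a small Python sketch. The design changes, their costs, and their expected benefits are all hypothetical, and the factor of 100 is the upper bound quoted above rather than a typical value.

```python
# Hypothetical design changes:
# (name, cost if made before development, expected benefit)
changes = [
    ("Simplify checkout form", 500, 80_000),
    ("Rename navigation labels", 200, 6_000),
    ("Restructure account area", 2_000, 150_000),
    ("Add inline help text", 300, 3_000),
]


def viable_changes(changes, cost_multiplier):
    """Return the names of changes whose benefit exceeds their cost at this stage."""
    return [
        name
        for name, early_cost, benefit in changes
        if benefit > early_cost * cost_multiplier
    ]


before = viable_changes(changes, cost_multiplier=1)    # changes made before development
after = viable_changes(changes, cost_multiplier=100)   # worst case after development

print(f"Viable before development: {len(before)} of {len(changes)}")
print(f"Viable after development:  {len(after)} of {len(changes)}")
```

With these illustrative numbers, all four changes pay for themselves before development, but only one still does once its cost is multiplied by 100.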

Sometimes even well-managed projects do not catch design issues until this late stage. The £18m Millennium Bridge, a footbridge crossing the River Thames in London, was designed and built in 2000 by Arup, a leading engineering firm. The bridge had to be closed after two days because the number of people walking over it caused it to sway – not up and down as might be expected, but from side to side. This was a design error that was not identified before or during development, but it had to be fixed, whatever the cost. After all, it was the first pedestrian bridge across the Thames for over 100 years.

Recommendation

Use cost-benefit analysis to choose which parts of the design to improve.
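One simple way to apply this is to rank candidate improvements by their benefit-to-cost ratio and fund them in that order until the budget runs out. The Python sketch below assumes that costs and benefits have already been estimated in the same currency; the `Improvement` class, the candidate list, and the budget are hypothetical, and the greedy ranking is a rule of thumb rather than a guaranteed optimal selection.

```python
from dataclasses import dataclass


@dataclass
class Improvement:
    name: str
    cost: float      # estimated cost of making the change now
    benefit: float   # estimated benefit, in the same currency

    @property
    def ratio(self) -> float:
        """Benefit gained per unit of cost."""
        return self.benefit / self.cost


def choose_improvements(candidates, budget):
    """Greedily pick worthwhile improvements, best benefit-to-cost ratio first."""
    chosen = []
    remaining = budget
    for item in sorted(candidates, key=lambda i: i.ratio, reverse=True):
        # Skip anything that costs more than it returns or no longer fits the budget.
        if item.benefit > item.cost and item.cost <= remaining:
            chosen.append(item)
            remaining -= item.cost
    return chosen


# Hypothetical post-development findings and estimates.
candidates = [
    Improvement("Fix error messages on sign-up", 4_000, 30_000),
    Improvement("Redesign search results", 15_000, 45_000),
    Improvement("Rework information architecture", 40_000, 60_000),
    Improvement("Restyle footer", 3_000, 2_000),
]

selected = choose_improvements(candidates, budget=20_000)
for item in selected:
    print(f"{item.name}: ratio {item.ratio:.1f}")
```

Here the two cheapest high-return fixes are funded, while the larger rework is deferred because it does not fit the remaining budget and the footer restyle is dropped because it costs more than it returns.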

Conclusion

By prioritising usability at every stage of the development cycle, designers can explore and test solutions cost-effectively. This approach enables better cost management and a clearer understanding of the business benefits of the design. Delaying usability considerations until the end of development can result in painful cost-benefit analyses of design improvements, as seen in the case of the Millennium Bridge, where additional funds were needed to fix issues after it had to be closed shortly after opening.

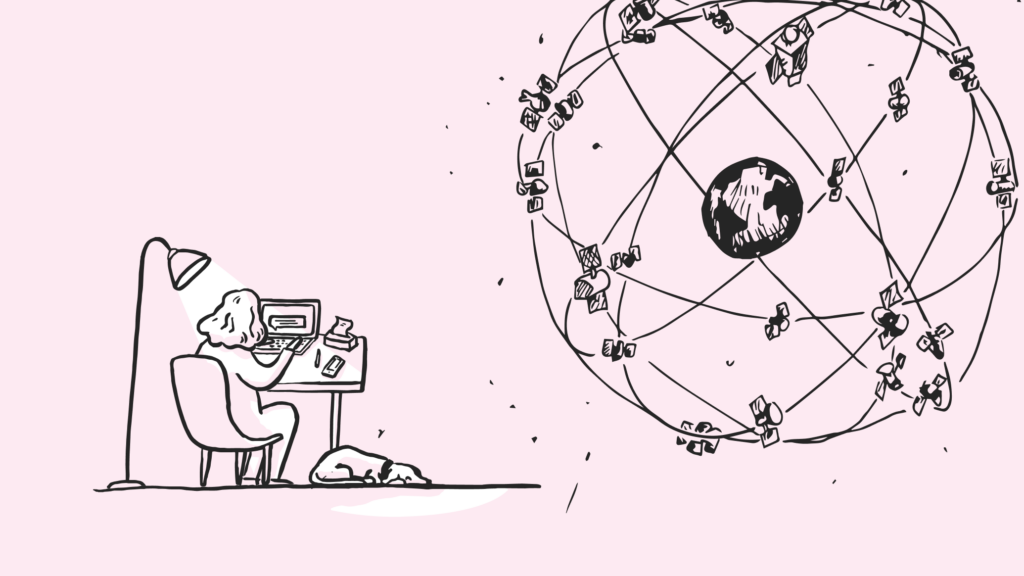

Various techniques can be used to achieve usability, human-centred design being one of them. Starting early, testing frequently, and monitoring continuously leads to more effective technology that aligns with user behaviour, and usability investments deliver their best returns when they are made early.

Can we help you?

Investing in user research at any stage will always uncover ways to improve a design, leading to significant benefits for both the user experience and the bottom line. Here at Nomensa, our team of experts can help you plan and prioritise your usability spend and ultimately create a better experience for your users. Get in touch today to learn more about how we can help.

* Lederer and Prasad, 1992. “Nine management guidelines for better cost estimating.” Communications of the ACM, 35(2), 51-59.
